SQAPP: No-Reference Speech Quality Assessment via Pairwise Preference

To Appear, ICASSP 2022

Abstract

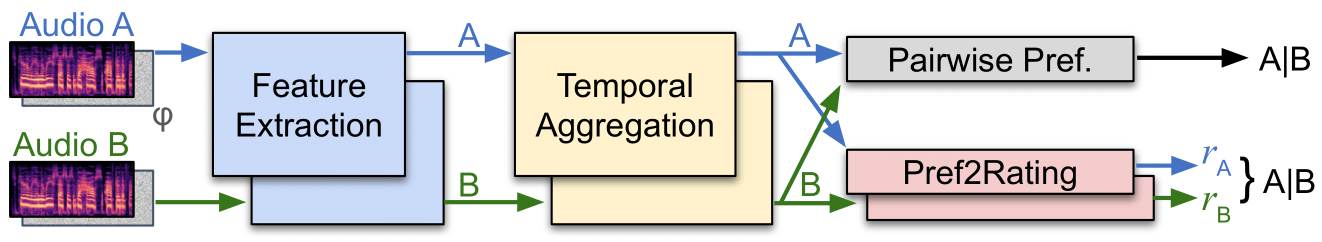

Automatic speech quality assessment remains challenging because we lack complete models of human auditory perception. Many existing full-reference models correlate well with human perception, but they cannot be used in real-world scenarios where ground-truth clean reference recordings are not available. No-reference metrics, on the other hand, typically suffer from several shortcomings, such as a lack of robustness to unseen perturbations and reliance on (limited) labeled data for training. Moreover, noise or large variance among the labels makes it difficult to learn generalizable representations, especially for recordings with subtle differences. This paper proposes a learning framework for estimating the quality of a recording without any reference and without any human judgments. The main component of this framework is a pairwise quality-preference strategy that reduces label noise, thereby making learning more robust. From pairwise preferences, we first learn a content-invariant quality ordering, and then we re-target the model to predict quality on an absolute scale. We show that the resulting learned metric is well calibrated with human judgments. Since it is a deep network, the metric is differentiable, making it suitable as a loss function for downstream tasks. For example, we show that adding this metric to an existing speech enhancement method yields significant improvement.
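To make the idea of learning from pairwise preferences concrete, here is a minimal sketch of a generic logistic (Bradley-Terry style) preference loss. It is an illustration only, not the loss used in the paper: the function names `softplus` and `pairwise_preference_loss` are hypothetical, the scalar scores stand in for the outputs of a quality network, and the sketch assumes a preference label is already available for each pair (how such labels are obtained is not described in this abstract).

```python
import math


def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def pairwise_preference_loss(score_a: float, score_b: float, a_is_better: bool) -> float:
    """Logistic loss on the score gap between two recordings.

    score_a, score_b: hypothetical scalar quality scores for the two recordings.
    a_is_better: True if recording A is the preferred (higher-quality) one.

    The loss is small when the preferred recording receives the higher score
    and grows roughly linearly when the ordering is violated.
    """
    gap = score_a - score_b
    # -log(sigmoid(gap)) == softplus(-gap); the roles swap when B is preferred.
    return softplus(-gap) if a_is_better else softplus(gap)


if __name__ == "__main__":
    # Correctly ordered pair: low loss.
    print(pairwise_preference_loss(2.0, 0.0, a_is_better=True))
    # Wrongly ordered pair: high loss.
    print(pairwise_preference_loss(0.0, 2.0, a_is_better=True))
```

Because such a loss depends only on the difference between the two scores, it constrains their relative ordering rather than their absolute values, which is consistent with the abstract's separate second step of re-targeting the model to an absolute scale.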

Additional Links

- Paper Preprint

- Code for our model (coming soon)

- Speech enhancement listening examples